Evals for agents: the missing bridge between “correct” and “reliable”

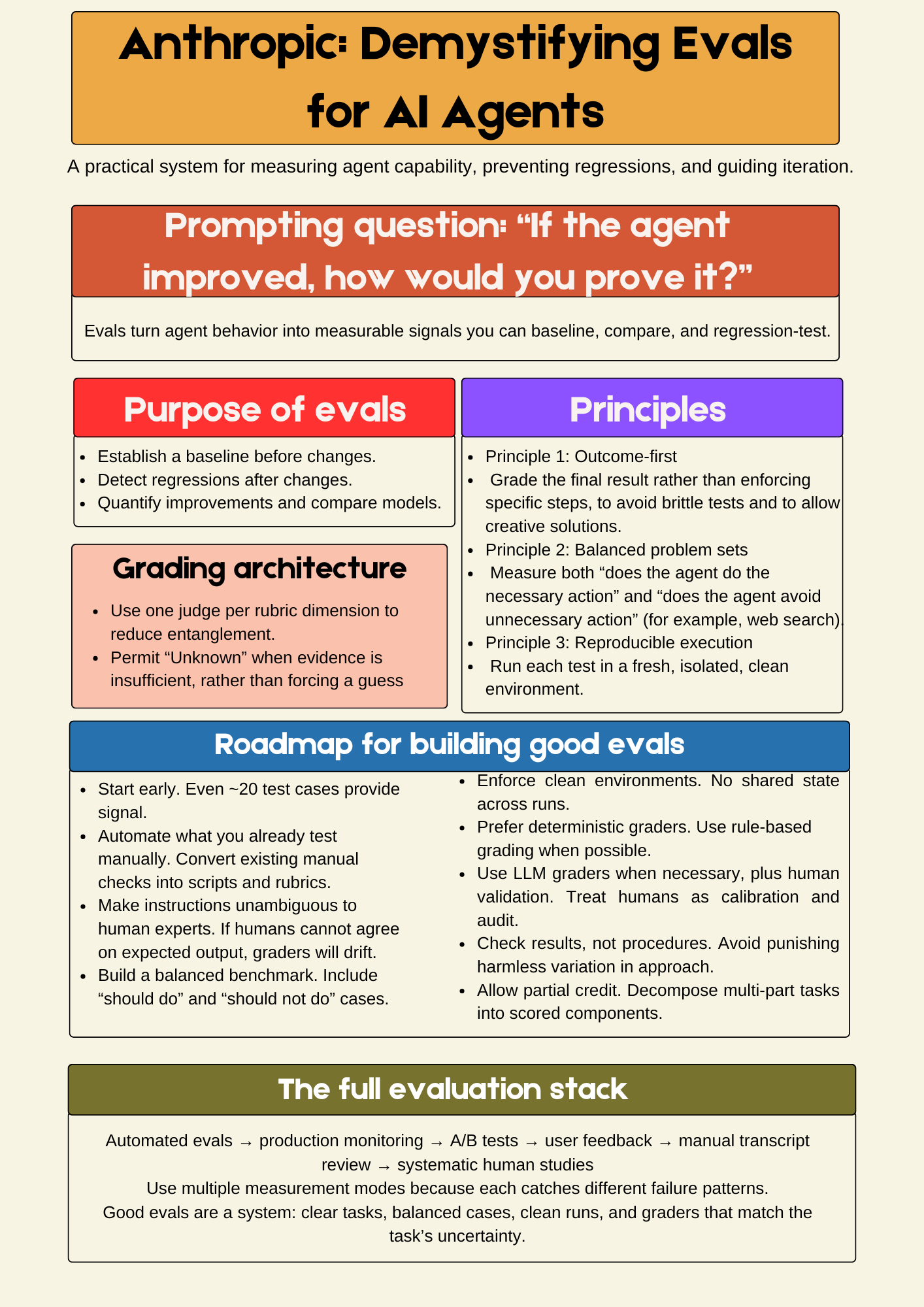

A printable poster is included, summarizing the evaluation roadmap and key metrics at a glance.

The first time I tried to “improve” an agent, I shipped a change that looked strictly better in a handful of spot checks.

A week later, a user hit a failure mode that my spot checks never exercised, and I had no principled way to argue whether it was a regression or a new edge case.

That experience is why I pay attention when an evals post is explicit about mechanics.

Anthropic’s Demystifying evals for AI agents is explicit about the parts that matter in practice: tasks, trials, transcripts, outcomes, graders, and the eval harness.

The reframing that changed how I read the post

Anthropic makes a clean distinction that many teams blur: the transcript is what the agent says and does, while the outcome is the final state of the environment.

That distinction explains a common failure: the agent says “done,” and a human believes it, while the database or UI state is unchanged.

In my own work, the most useful mental model has been a two-axis rubric:

Correctness: are the agent’s claims and actions justified by evidence and constraints.

Completeness: did the agent cover the required components of the task, with no missing obligations.

I have found that teams often build graders for correctness first because it is easier to imagine “wrong answers.”

Completeness is harder because it requires an explicit inventory of what must be present, and that inventory is usually implicit in someone’s head.

Anthropic’s advice on research evals maps directly onto this: groundedness checks are correctness, coverage checks are completeness, and source-quality checks constrain what counts as evidence.

That alignment is the bridge I needed between “nice principles” and “buildable evals.”

A concrete method: turn free text into atomic claims, then grade each claim

In earlier posts, I have been exploring “atomic requirements” or “atomic claims” as an evaluation primitive.

The idea is simple: free text is ambiguous, and graders fail when the unit of evaluation is too large.

Here is the procedure I actually use.

Step 1: Atomize the spec

Take a task description and extract a small list of claims that would let two experts agree on pass or fail.

Example task text: “Draft a user-facing explanation and include sources.”

Atomic claims:

The answer contains a direct response to the user’s question.

The answer cites sources for factual claims.

The sources are relevant to the claims they support.

The answer covers the key topics required by the prompt.

This is the completeness inventory.

It is short on purpose, because long rubrics create disagreement and grading drift.

Step 2: Assign one grader per claim

Anthropic recommends isolating rubric dimensions and letting a judge return “Unknown” when evidence is insufficient.

I have found this is the difference between “LLM grader theater” and a grader you can debug.

So you might implement:

Deterministic grader for “contains at least one citation marker.”

LLM grader for “citations support the specific claims,” with an allowed “Unknown.”

LLM grader for “coverage of key topics,” calibrated against a few human-scored examples.

Step 3: Compose scores with partial credit

Anthropic explicitly recommends partial credit for multi-component tasks.

Atomic claims make partial credit meaningful, because “missed one required component” becomes a visible failure mode rather than a vague “felt incomplete.”

This is also where “correctness vs completeness” becomes operational.

You can separate “unsupported claim” from “missing required topic,” and they suggest different fixes.

Why “grade outcomes, not paths” is more than a slogan

There is a strong instinct to test that the agent followed a specific tool sequence.

Anthropic argues this is brittle and punishes valid creativity, and recommends grading what was produced, not the path.

My program-analysis instinct agrees with this for a technical reason.

Path constraints are an unstable interface because they couple the eval to incidental implementation choices inside the agent harness.

Outcome-first grading gives you a stable contract.

You can change prompting, tool selection logic, or decomposition strategy, while keeping the same definition of success.

This does not mean “ignore the transcript.”

Anthropic explicitly says you must read transcripts to validate graders and catch unfair grading.

In practice, outcome grading is your gate, and transcript review is your debugger.

The reliability question that pass@k and pass^k forces you to answer

Agents are non-deterministic across runs, so a single success rate hides product-relevant differences.

Anthropic’s pass@k and pass^k framing is valuable because it forces a decision: are you building a tool that can be retried, or a behavior users expect to be consistent.

I treat it as a product requirement, phrased as a yes-no question:

Is a retry acceptable to the user?

If yes, optimize pass@k.

If no, optimize pass^k, and assume you will need stronger specs and more deterministic graders.

This small decision changes what you measure, what you ship, and how fast you can iterate.

What actually changed for me after reading this

The post did not convince me that evals matter.

It gave me a workable decomposition of where ambiguity lives.

Ambiguity lives in tasks that two experts would grade differently.

Ambiguity lives in graders that cannot say “Unknown” and therefore hallucinate certainty.

Ambiguity lives in suites that only test one direction of a behavior and accidentally train overtriggering or undertriggering.

My current working rule is: if I cannot express the eval as a small set of atomic claims plus graders, then I am still in “manual testing mode,” even if I have a benchmark name and a score.

If you can name the exact claim that failed and the grader that detected it, you have turned agent reliability from guesswork into engineering.