Atomic claims as an evaluation primitive

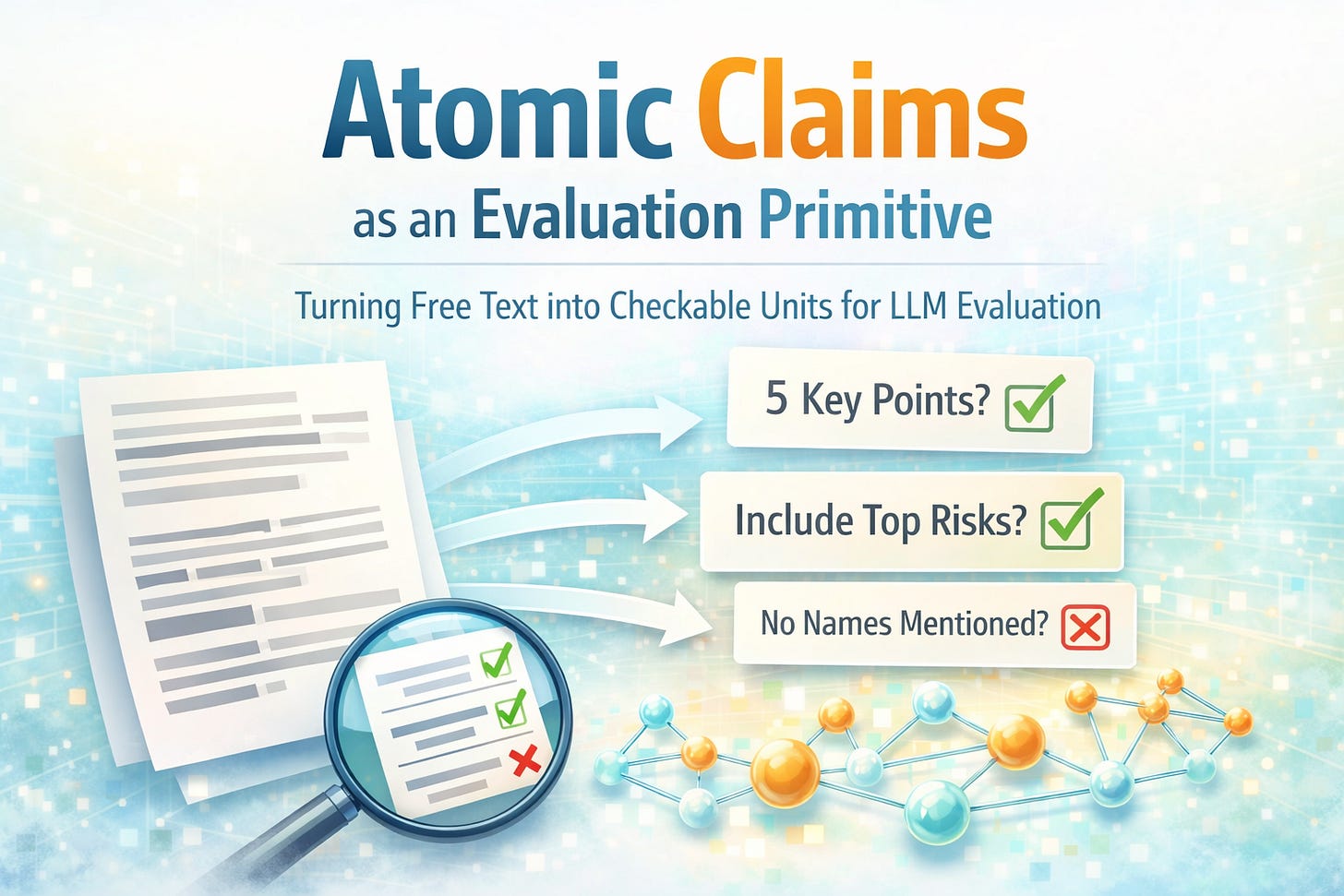

Turning free text into checkable units for LLM evaluation

A model output looks reasonable, a judge prompt approves it, and a human reviewer still finds a clear miss.

This happens in code review, summarization, policy compliance, data transformation, and task planning.

The shared failure is structural: the evaluation never made the underlying claims explicit, so the judge did not systematically check them.

This post distills one idea from my recent paper, and it is the one that generalizes best: treat evaluation as checking a set of atomic claims rather than judging a whole artifact at once.

The problem: free text hides what must be checked

Many real evaluations use free text as the reference.

That reference might be a specification, a rubric, a policy, an instruction block, or a source document.

Free text is compact and human-friendly.

It also bundles multiple obligations, constraints, and conditions into single sentences.

When an LLM is asked to evaluate against that text in one shot, it has to do two jobs:

infer what the claims are

verify whether each claim holds

Those two jobs compete for attention.

The result is predictable: some claims are never checked.

A lens: evaluation is coverage over a claim set

Any “correctness” judgment implies a set of checkable statements.

Examples:

The output includes required elements.

The output respects specific constraints.

The output does not introduce unsupported assertions.

The output handles a stated edge case.

The output follows formatting rules.

If this claim set is not explicit, evaluation becomes a partial search.

The judge will focus on a few salient points and ignore the rest.

Definition: atomic claim

An atomic claim is a minimal, indivisible statement that can be checked independently.

An atomic claim should map to a single yes-or-no question.

If verifying the statement requires multiple distinct questions, it is not atomic.

Example

Reference sentence:

“Summarize the report in 5 bullets, include the top 3 risks, and avoid naming individuals.”

This sentence contains three atomic claims:

Output is exactly 5 bullets.

Output includes the top 3 risks.

Output does not name individuals.

Keeping it as one sentence encourages the judge to respond with an overall impression.

Splitting it forces explicit checks.
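
To make the split concrete, here is a minimal Python sketch that pairs each of the three claims with its own yes/no check. The names `AtomicClaim`, `TOP_RISKS`, and `KNOWN_NAMES` are assumptions for illustration only. The substring checks stand in for whatever verifier you actually use, such as an LLM judge or a human reviewer.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class AtomicClaim:
    claim_id: str
    text: str
    check: Callable[[str], bool]  # answers one yes/no question about the output


def bullet_lines(output: str) -> list[str]:
    # Treat lines that start with "- " as bullets (an assumed output convention).
    return [ln for ln in output.splitlines() if ln.strip().startswith("- ")]


# Hypothetical reference data: in practice these come from the report itself.
TOP_RISKS = ["vendor lock-in", "data loss", "budget overrun"]
KNOWN_NAMES = ["Alice Smith", "Bob Jones"]

CLAIMS = [
    AtomicClaim("C1", "Output is exactly 5 bullets.",
                lambda out: len(bullet_lines(out)) == 5),
    AtomicClaim("C2", "Output includes the top 3 risks.",
                lambda out: all(r in out.lower() for r in TOP_RISKS)),
    AtomicClaim("C3", "Output does not name individuals.",
                lambda out: not any(n.lower() in out.lower() for n in KNOWN_NAMES)),
]


def evaluate(output: str) -> dict[str, bool]:
    # One verdict per claim, so a failure points at a specific claim.
    return {c.claim_id: c.check(output) for c in CLAIMS}
```

Calling `evaluate(summary_text)` returns one boolean per claim ID, instead of a single overall impression.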

Why atomicity helps LLM evaluation

Atomic claims shift the workload from implicit interpretation to explicit verification.

This matters because LLMs tend to be more reliable when asked focused questions with clear boundaries.

Atomic claims improve evaluation in three ways.

1) Higher coverage

Each claim is a unit of work.

If you have 20 claims and you check all 20, you have evidence of coverage.

With holistic judging, coverage is unknown.
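
Once claims are explicit, coverage becomes a number you can compute. The sketch below assumes verdicts are stored per claim ID, with a missing entry or `None` meaning "never checked". The function name and data shapes are illustrative, not taken from the paper.

```python
from typing import Optional


def coverage_report(claim_ids: list[str],
                    verdicts: dict[str, Optional[bool]]) -> dict:
    # A claim counts as checked only if the judge returned True or False for it.
    unchecked = [cid for cid in claim_ids if verdicts.get(cid) is None]
    checked = len(claim_ids) - len(unchecked)
    return {
        "coverage": checked / len(claim_ids) if claim_ids else 0.0,
        "unchecked": unchecked,
    }
```

A report with a non-empty `unchecked` list is exactly the information a holistic verdict hides.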

2) Lower ambiguity

Compound sentences often hide conditional logic.

Atomic rewriting makes scope clearer, such as what counts as an exception, what is mandatory, and what is optional.

3) Better error localization

When a check fails, you learn which claim failed.

This makes feedback actionable and makes regression tracking possible.
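
Per-claim verdicts also make regression tracking a simple diff between two runs. This is a sketch under the assumption that each run is stored as a mapping from claim ID to a boolean. `diff_runs` is a hypothetical helper name.

```python
def diff_runs(previous: dict[str, bool],
              current: dict[str, bool]) -> dict[str, list[str]]:
    # Claims that passed before and fail now.
    regressed = sorted(c for c, ok in current.items()
                       if not ok and previous.get(c) is True)
    # Claims that failed before and pass now.
    fixed = sorted(c for c, ok in current.items()
                   if ok and previous.get(c) is False)
    # Claims that were not part of the previous run at all.
    new = sorted(c for c in current if c not in previous)
    return {"regressed": regressed, "fixed": fixed, "new": new}
```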

Turning free text into atomic claims

You can treat this as a small compilation step.

Input: messy free text.

Output: a list of checkable claims.

A practical procedure:

Extract sentences or bullet points from the reference.

Split on conjunctions and conditional connectives (and, or, unless, except, then), and on verbs that bundle more than one action.

Normalize each fragment into a standalone statement with one verb.

Validate atomicity by asking: “Can I check this with one question?”

Tag each claim with a type, such as format, content, constraint, or edge case.
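
The splitting step can be roughed out with a regular expression. This is a deliberately naive sketch: it splits on commas, semicolons, and the connectives listed above, and it returns candidate fragments only. The fragments still need the normalization and atomicity check from the later steps, and splitting on "unless" or "except" detaches a condition from the statement it modifies, so treat the output as a first pass.

```python
import re

CONNECTIVES = r"(?:and|or|unless|except|then)"

# Split on a comma or semicolon (optionally followed by a connective),
# or on a connective surrounded by whitespace.
_SPLIT = re.compile(
    rf"\s*[,;]\s*(?:{CONNECTIVES}\s+)?|\s+{CONNECTIVES}\s+",
    flags=re.IGNORECASE,
)


def candidate_fragments(sentence: str) -> list[str]:
    parts = _SPLIT.split(sentence.strip().rstrip("."))
    return [p.strip() for p in parts if p and p.strip()]
```

On the reference sentence from the earlier example, this yields three fragments: "Summarize the report in 5 bullets", "include the top 3 risks", and "avoid naming individuals".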

Example prompt

You are given a piece of reference text.

Break it into atomic claims.

Rules:

– Each claim must express exactly one obligation, constraint, or condition.

– Each claim must be checkable with a yes/no question.

– Do not merge multiple actions into one claim.

– Preserve the original meaning.

Output one claim per line.
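
Wired into code, the prompt might look like the sketch below. The `complete` argument is a stand-in for whatever function sends a prompt to your model and returns its text. It is an assumption here, not a real library call. The parser tolerates bullets or numbering that the model may add despite the instructions.

```python
import re
from typing import Callable

ATOMIZE_PROMPT = """You are given a piece of reference text.
Break it into atomic claims.
Rules:
- Each claim must express exactly one obligation, constraint, or condition.
- Each claim must be checkable with a yes/no question.
- Do not merge multiple actions into one claim.
- Preserve the original meaning.
Output one claim per line.

Reference text:
{reference}
"""


def parse_claims(raw: str) -> list[str]:
    claims = []
    for line in raw.splitlines():
        # Drop a leading bullet or "1." / "1)" style numbering, if present.
        cleaned = re.sub(r"^\s*(?:[-–*•]|\d+[.)])\s*", "", line).strip()
        if cleaned:
            claims.append(cleaned)
    return claims


def atomize(reference: str, complete: Callable[[str], str]) -> list[str]:
    # `complete` is a hypothetical prompt-in, text-out model call.
    return parse_claims(complete(ATOMIZE_PROMPT.format(reference=reference)))
```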

What changes in practice

The evaluation prompt changes from:

“Judge whether this output satisfies the reference.”

To:

“For each claim, decide satisfied or not satisfied, and cite evidence from the output.”

This changes the judge’s behavior.

It reduces the chance that the model “forgets” to check a detail, because the checklist is explicit.
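
A per-claim judge prompt and a strict parser for its reply could be sketched as follows. The JSON response format and the key names `claim`, `satisfied`, and `evidence` are my assumptions for illustration. Any structured format works, as long as every verdict can be tied back to a claim number.

```python
import json


def build_judge_prompt(claims: list[str], output: str) -> str:
    numbered = "\n".join(f"{i}. {c}" for i, c in enumerate(claims, start=1))
    return (
        "For each claim, decide satisfied or not satisfied, "
        "and cite evidence from the output.\n"
        'Respond with a JSON list of objects with keys "claim" (number), '
        '"satisfied" (true/false), and "evidence" (quote).\n\n'
        f"Claims:\n{numbered}\n\nOutput to evaluate:\n{output}\n"
    )


def parse_verdicts(raw: str, n_claims: int) -> dict[int, dict]:
    verdicts = {}
    for item in json.loads(raw):
        idx = item.get("claim")
        ok = item.get("satisfied")
        # Keep only well-formed verdicts that point at a real claim number.
        if (isinstance(idx, int) and not isinstance(idx, bool)
                and 1 <= idx <= n_claims and isinstance(ok, bool)):
            verdicts[idx] = {"satisfied": ok,
                             "evidence": item.get("evidence", "")}
    return verdicts
```

Any claim number missing from the parsed result is a claim the judge skipped, which you can surface or retry rather than silently accept.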

Generality across LLM applications

Atomic claims are useful whenever a system compares an output to text.

Summaries versus sources: atomic claims are key facts.

Policy compliance: atomic claims are individual rules.

Code generation: atomic claims are testable behaviors.

Data tasks: atomic claims are schema and transformation constraints.

Instruction following: atomic claims are discrete tasks implied by the prompt.

The artifact changes.

The primitive stays the same.

Why this matters

As teams rely on LLMs to judge LLM outputs, evaluation errors compound.

Holistic judging fails quietly because it does not reveal what went unchecked.

Atomic claims make evaluation measurable.

They convert “does this look right” into “which statements are verified.”

In later posts, I will describe what happens after atomization, because combining many atomic checks introduces its own failure modes worth understanding.
